This is the first of a two-part essay on strategic manipulations of other agents’ epistemic states. Part One lays the groundwork by tying together theories of metonyms, expression games, and signals vs. cues. Part Two lays out the epistemic axes of legibility vs. illegibility, commitment vs. flexibility, and ignorance vs. knowingness, and the situations in which it is strategically advantageous to hew to one end of these axes or another.

Abduction, All The Way Down

What is our big-picture situation, as human beings and, more importantly, as living organisms? We have preferences (desires, interests), premised on biology but mediated by culture. We have beliefs about the requirements for realizing these preferences (about the constraints and affordances of our environment, and our personal capacity to manipulate it), and these together guide our choices. Belief and reality are cybernetic, as CCRU-heads eagerly point out: what “is” is the product, in part, of what we believe is (and vice-versa). Our beliefs influence actions; actions influence futures, and we will feel differently about these futures in relation to our preferences. This is the garden of forking paths which preoccupied Borges, among others.

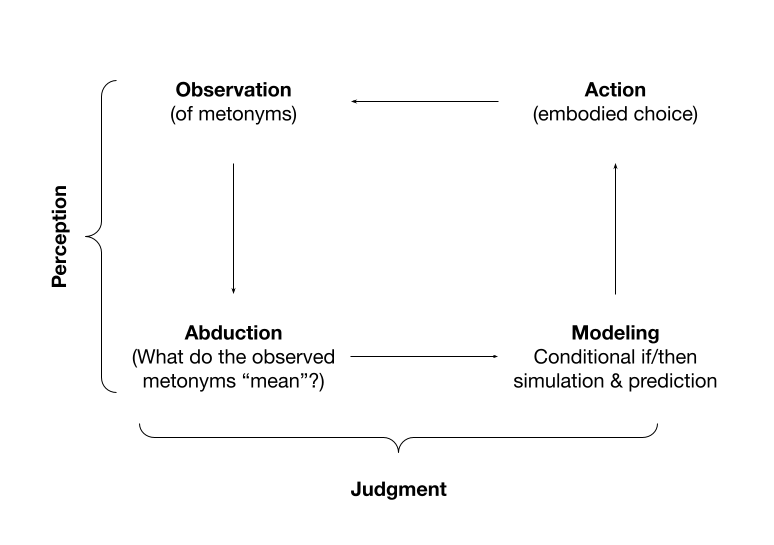

The ability to realize preferred futures, and avoid less-preferred ones, is considered by many the beating heart of intelligence. It straddles perception, judgment, and tactics: we deliberate, with anguish; we await, with anxiety; and we regret or rejoice when the future arrives, confirming or upsetting expectations. (We learn in either case, but more from surprise.) Air Force Colonel John Boyd theorized the OODA loop of strategic action: observe, orient, decide, act. After an action, the loop begins anew: one observes the effects of the action, and the new situation which the action engenders, using the information to update one’s actions in the subsequent loop. I’ll leave the basic structure in place while re-conceptualizing certain stages to be more salient to the discussion which follows:

The line between observation and judgment is fuzzy (perception involves both). They’re bridged by the abduction stage, which occurs at multiple levels of abstraction and at multiple levels of hierarchical (conscious and unconscious) processing, transforming blurs of color and light into recognized objects,[1] and then again into larger theories about the causal forces behind them, or the nature of existence. Each higher level allows greater synoptic prediction (and thereby control) at the cost of local fidelity. Perhaps I abduce (totally unconsciously) that my interlocutor has a Louis Vuitton purse over her shoulder. From here, somewhat more consciously but still far from fully so, I can make abductions as to her wealth, background, and values. Getting to these originary, causal factors sets up an array of broad expectations about her likely actions and her world model, even in domains seemingly far removed from purse-land.

This abduction stage involves agents turning observations into evidence, piecing together explanatory theories. Lossy compression and decompression offer a helpful metaphor for this process: when there are gaps in the record, we must, in “unpacking” the available metonyms, fill in these gaps with assumptions. These assumptions reside in our schematic understanding of the world, an understanding which is deeply statistical and associative but ultimately pragmatic; what we are interested in is the decisions we must make on account of the situation we find ourselves in. The evidence in front of us will always be indeterminate to some degree, but it lies on a grand spectrum from determinacy to indeterminacy, and if our inferential abilities are strong we will have some half-reliable “meta” sense of just how ambiguous the information in front of us is. (But we will never know for sure; we are trapped, or empowered, depending on how one prefers to look at it, by abduction alone.)

The perceptual-analytic schema we use to identify and interpret goal-relevant metonyms is built out of experience. It takes the form of sensory associations, the statistical coincidence of sensory experiences. One piece of a sensory experience suggests a later sensory experience because we have learned they frequently appear together. These associations are theories about how the world works, ranging from the highly embodied and pre-linguistic to the highly abstract and verbal.[2] These theories meaningfully track the real regularities of the world: if a suggestion is upset, our sense of the statistical coincidence is altered; perhaps we will look for a rationale for why the given suggestion failed to hold under the present circumstances.[3]

The abductive-interpretive process is deeply contextual; Goffman calls it “discursive,” insofar as it “pertains to the general relationship of [the observed] individual to what is transpiring,” but this does not go far enough. A given sign’s meaning is inevitably modified by (is indexical to) all other signs, as well as the embedding context, which is social, physical, historical. A choice of attire (say, a piece of headgear) will be interpreted contextually, by an observer, relative to the fashion landscape in the given society, the known history of the observed, and the larger fashion ensemble that the observed has chosen (as well as their attitude and demeanor: for instance, is it “ironic” or “sincere”?).

Since we live in a highly social environment, much of our “situation,” both generally and in a given moment, is social, or more precisely, inter-agentic. This term captures the general ecological principle of our animal ancestry, a principle which we have not escaped, where, given some physical proximity, the action of each organism has direct bearing on the welfare and agency of other organisms. (In our pre-history, many of our strategic games were played against large cats.) That is, ecology engenders a strategic way of being, a macro-situation of interdependent decision-making. Just as a mudslide may hinder your advancement—is part of the environmental situation within which one attempts to optimize—other organisms are also a part of the environment. Unlike a mudslide, they too are agents, and can predict and choose, based on available information in their environments (their own theories of metonyms). This is not entirely unlike inanimate objects—a mudslide behaves dynamically with respect to its environment, too—but, crucially, the mudslide has no powers of abduction. Insofar as meaning is entailment, a difference that makes a difference, a mudslide cannot perceive meaning, can only passively be acted upon by it. There is no concept of anticipation, countermove, and predictive optimization—just pure reactivity.

This makes living organisms not just physically but epistemically manipulable: their ability to anticipate and optimize, to adapt themselves conditionally and in the short term into greater fitness with the environment, is both their greatest strength and their greatest vulnerability. Affect their priors, or their desires and preferences, and they may make a different decision. Our expression games include but are far from limited to speech; the superset here is the manipulation of appearances and the manipulation of representations, be it by linguistic account or metonymic implication.

Since an animal uses metonymic abduction to make decisions as much as we do (though arguably with lesser sophistication), it is just as much in our interests to manipulate its epistemic state (and thus, indirectly but of superseding importance, its actions) as it is to manage the beliefs of an employer, spouse, or rival. We may not want it to be aware of our presence, or to believe us suitable food, if it poses a threat. We may wish it to think us more powerful than we are in actuality (thus, throwing rocks and shouting are advocated strategies for warding off attack by a cougar). We might avoid eye contact, so that it will not think we are challenging it. All of these are epistemic moves: the stone-throwing is not meant to actually injure the predator so much as to intimidate or discourage it. We are trying to manipulate the animal’s knowledge, beliefs, and impressions. Its preferences, we rightly believe, are more or less fixed: we cannot easily talk it into converting to ethical vegetarianism, or recognizing the sanctity of human life. But if its actions are the product of desire and belief, and if we wish to modify those actions, our best bet lies in altering beliefs.

At the same time as I attempt to control the information and abductions available to my observer about me (be it an employer or a lion), I also attempt to abduce its own epistemic state and preferences: Has the lion seen me? Is it hostile? Hungry? Preparing an attack? Or: does my employer believe me to be a productive, contributing employee? Does he know I’m fibbing when I call in sick? Both the lion’s fate and my employer’s fate depend on my own actions, refracted through their beliefs about my actions (past and future), just as mine depends on theirs, although of course the stakes can be highly asymmetrical.

Goffman calls this situation a “contest over assessment.” The assessing party’s interest is in “truth”: a theory of the assessed which can reliably predict their actions, allowing the assessing party maximum agentic power in optimizing around them. The lion wishes to know in actuality whether I am edible, and whether I pose a threat to its own well-being. The assessed party’s interest is in producing an impression (in having the observer abduce a theory) that is in the assessed party’s best interest, giving him maximum agentic power. (“Not edible; will put up a good fight.”) In many social settings, this strategic impression is synonymous with making the observer like, or be impressed by, you; but often we want to be negatively selected out, as in draft-dodging.

As the examples here hopefully make clear, in an interaction, both parties play the assessing and assessed party relative to one another.

Just as it can be assumed that it is in the interests of the observer to acquire information from a subject, so it is in the interests of the subject to appreciate that this is occurring and to control and manage the information the observer obtains; for in this way the subject can influence in his own favor responses to a situation which includes himself.

Goffman 1969

Whatever part of the lion’s decision-making loop I try to abduce (its preferences, capacities, decisions, epistemic states), it should be equally clear that I am not interested in the lion’s state of mind for its own sake, but in how these parts contribute to the “final” stage of our modified OODA loop, which restarts the process: that is, the delta in one’s “situation” before and after the action. Each transformation of one’s situation leaves one better or worse off, more or less empowered, with better or worse, and more or fewer, options. And there is some reason to believe that serotonin, and with it mood, strongly tracks one’s empowerment. We’ll call this “intrinsic empowerment,” after the machine learning paradigm,[4] but we could equally refer to it as “keeping upwind.”

Flying a glider is a good metaphor here. Because a glider doesn’t have an engine, you can’t fly into the wind without losing a lot of altitude. If you let yourself get far downwind of good places to land, your options narrow uncomfortably. [5]
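The idea that a worse position is one with fewer options can be made concrete with a small sketch. What follows is a toy illustration under my own assumptions, not anything specified by the essay or by Klyubin et al.: the bounded line world, the `MOVES` tuple, and the function names are all hypothetical. It also assumes deterministic dynamics, in which case the channel-capacity definition of empowerment reduces to the logarithm of the number of distinct states an agent can reach.

```python
import math

# Hypothetical toy world (an assumption for illustration only):
# positions 0..width-1 on a line, with walls at both ends.
MOVES = (-1, 0, 1)  # step left, stay put, step right


def reachable_states(start, steps, width):
    """Return the set of positions reachable from `start` after `steps` moves."""
    frontier = {start}
    for _ in range(steps):
        frontier = {
            min(max(pos + move, 0), width - 1)  # walls clamp the move
            for pos in frontier
            for move in MOVES
        }
    return frontier


def empowerment_bits(start, steps, width):
    """log2 of the number of distinct reachable positions.

    Assumes deterministic dynamics, where empowerment reduces to
    counting distinct outcomes.
    """
    return math.log2(len(reachable_states(start, steps, width)))


if __name__ == "__main__":
    for start in (0, 4):
        print(start, round(empowerment_bits(start, steps=2, width=9), 2))
```

In this toy world, an agent pressed against the wall (position 0) can reach three positions in two moves, while one in the middle (position 4) can reach five. The cornered agent is the glider pilot who has drifted downwind.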

Disguised Signals

With this in mind, we might rightfully wonder—if we are locked in contests of appearances and assessments, a duel of theories, each player attempting to corrupt the other’s model while improving their own—why trust a rival’s self-representation at all? This has been a long debate in signaling theory, whose standard solution involves the handicap principle, or the costly signal concept: There is some inherent, expensive-to-fake connection between the larger quality and its metonymic sign, which allows the assessing party to trust the reality of its abduction. A second resolution is to propose situations of genuine coordination: either two members of an ant colony have purely aligned genetic interests, and thus no incentive to deceive, or else two genetically disparate agents have provisionally and situationally aligned interests; these may be imperfect, since there is always some conflict in such arrangements (as Schelling stresses), but there is sufficient alignment to reward broad trust over mutual suspicion.

There is a third reason that we trust the metonyms in front of us, one which is obvious but sometimes forgotten on account of the signal-cue distinction. That is, most available, assessed metonyms are not signals. They are cues. And it is the existence and abundance of cues which allow signals to hide in their midst.

To unpack this: A signal, in ethology, is information intentionally produced by an organism for its own benefit, in an attempt to manipulate observing parties. There is a subset of signals that are also advantageous for the observer, in the case of “honest” signals—if the dart frog is, indeed, poisonous, the predator would be better off finding food elsewhere, and the frog’s bright red skin is useful information to them both. A cue, meanwhile, is unintentionally emitted information which ranges in its effect on the emitting organism’s empowerment from neutral to fatal. Rustling leaves in a tree as a bird lands or takes off—potentially alerting predators—is an archetypal example of a cue.

Crucially, to the assessing observer, there is no obvious distinction between a signal and a cue. To the observer, there are only indicators, or what I have called metonyms. Intent is unknowable, and benefit is a hypothetical. There are certainly cases where we can speculate that the purpose of an observed actor’s action was, solely or primarily, to manipulate our theories of the world. (Showing off, winning over by flattery, etc.) But we are still caught, as is our mortal condition, in abduction—we can only make educated judgments, based on limited information and our own previously abducted theories of the actors’ motivations.

Rather, all that is available to us as observers is information, and the reason for the information’s existence—its motivated origins—is typically ambiguous and plural (“reasons”). Actors, in the course of living their everyday lives, are constantly “exuding expressions,” in Goffman’s language—expressions being roughly analogous to cues, where communication maps roughly onto signals—and this exuded information is made available to others, putting them at a strategic advantage. Much of this information is produced not for the sake of appearances—to manipulate observers—but emerges as the byproduct of other goals. (Mosquitoes use CO2 to locate mammals to bloodsuck, but mammals do not release CO2 as a signal to attract them.) But whether or not it was produced for “extrinsic” reasons—that is, to create an impression and alter observers’ theories of us—or for “intrinsic” reasons—that is, rationales which would persevere even in unobserved privacy—we on the outside can rarely tell. This motivational ambiguity provides cover for signals to be taken seriously.

Let us take as an example a classic ambush strategy. A few members of Team A, aware of the location of members of Team B, strategically allow themselves to be sighted in the forest; once sighted, they flee. The Team B members, outnumbering their fleeing rivals, give pursuit, only to stumble into a clearing surrounded by the full strength of Team A, where they are forced to surrender. We, and they, know now that the appearance of the initially sighted Team A members, and their flight into the forest, was a signal: an attempt to manipulate Team B members’ behavior to Team A’s advantage. But at the time, it was perfectly reasonable to assume that their initial flight was motivated by self-preservation instincts and fear. We can call these disguised signals: signals masquerading as cues.[6] One’s interpretation of the metonyms at hand depends heavily on frequency: how often are such acts done for intrinsic reasons (survival) versus extrinsic reasons (ambush)? Putting this in the language of pragmatic decision rules: given an observed metonym, how often does a course of action (e.g. pursuit) result in desirable vs. undesirable outcomes?
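The frequency-based decision rule can be sketched in a few lines. This is a minimal illustration under assumptions of my own: the function names, the payoff numbers in the usage note, and the choice to score "staying put" as zero are all hypothetical, and real pursuers obviously do not have clean probabilities to hand.

```python
def expected_value(p_disguised, payoff_if_cue, payoff_if_signal):
    """Average payoff of acting on a metonym that is a disguised signal
    with probability `p_disguised` and an honest cue otherwise."""
    return (1 - p_disguised) * payoff_if_cue + p_disguised * payoff_if_signal


def should_pursue(p_ambush, gain_if_genuine, loss_if_ambush):
    """Pursue only if the expected payoff beats staying put (scored as 0)."""
    return expected_value(p_ambush, gain_if_genuine, -loss_if_ambush) > 0


def break_even_probability(gain_if_genuine, loss_if_ambush):
    """Ambush frequency above which pursuit stops paying on average."""
    return gain_if_genuine / (gain_if_genuine + loss_if_ambush)
```

With a made-up gain of 1 for catching genuinely fleeing rivals and a made-up loss of 5 for walking into an ambush, pursuit pays only while fewer than one in six such flights are feints. The disguised signal works because, against that background frequency, pursuit is usually the right call.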

Or we might consider gaze. Humans are extraordinarily talented at tracking and monitoring gaze; this skill is a foundation of our social life, assisting us in grokking an implicit subject (gaze constrains the range of possible referents) or synchronizing our behavior with a partner. But we do not gaze only to produce a certain belief or behavior in observers. Most of our gaze is intrinsically functional, helping us take in further sensory information, observe more metonyms, and thus make better assessments of our situation. The majority of our gaze is private, rather than noticed; its effects are one-way instead of cybernetic (there is no change in reality when one gazes at a fridge and frying pan, or even at an insect). Gaze is not typically designed to produce an effect, but only to perceive, and yet it often does produce one. There are clear cases in which we strategically direct our gaze, as in flirting or athletic feints. “Watch their hips, not their eyes” is common advice in basketball for playing effective defense.

When certain indicative cues become commonly recognized as frequent intentional tactics, their status changes. In flirting, initiating eye contact can be read as a kind of proposition, more than merely the expression of interest; the same is true of repeatedly meeting initiated eye contact, which can be read as some form of acceptance of the implicit proposal. We see here the clear pipeline by which mere cues, or expressions, can transform into reliable signals, or means of communication, once common knowledge as to the “meaning” of these expressions emerges. At the same time, this common knowledge means that parties have clear incentive to strategically fake such information. In other words, it is individuals’ knowledge of how a cue will be reacted to (reliably, relative to an audience), combined with voluntary control over the production of that cue, which transforms it into a viable signal. And when one player knows another player knows that an indicator will elicit a reaction, he knows that he can be “played” or exploited by disguised signals.

Insofar as the [slave catcher’s] behavior is relevant to the escaping slave’s project of evading capture, certain inferences and actions by the catcher/tracker are preferable, for the tracked party, to other ones. So: where “reading” a scene means an action-oriented perception of entailments, the tracked person might create—i.e. “write”—a scene so as to create, in the tracker, a reading. The syllogism is straightforward: the function of a reading is to inform action; the function of a writing is to inform a reading; and thus the indirect function of a writing is to inform action.

What allows the tracked person to write a scene? Two things: an understanding of statistical correlations, e.g. between the orientation of tracks and the direction of movement, and an understanding that the tracker is cognitively similar (i.e. a fellow prediction or inference machine), and can and will leverage these associations to make inferences. From here, in order to achieve a goal—to evade the tracker—the tracked may attempt to produce false inferences in the interpreting tracker. The tracked may double back, retracing steps in reverse, or snap branches off in the wrong direction. Understanding which “indicators” metonymically “testify” to some larger, invisible reality (here temporally, rather than spatially, invisible, since the behavior of interest to the tracker—the presence and movement of the tracked—occurred at a different time) the tracked is able to create a testimony that differs from reality, to produce an inference that is false—in a word, to deceive.[7]

We can think of this as the stabilization of meaning. As the meaning of an indicator (or “symbol,” or “sign”—a metonym) stabilizes in common knowledge, its manipulative efficacy changes; it weakens as a reliable indicator, because it can be easily “free-ridden” by mimics. This is one possible explanation for the well-documented fashion cycle of innovation, increasingly widespread adoption, and eventual abandonment.

Similarly, one orders dinner out of a mixture of intrinsic and extrinsic motivations. Short of obvious tells, like mispronouncing the name of the entree, or putting on great if transparent pretensions, how is a dinner partner to guess which motivation, extrinsic or intrinsic, guides a meal order? And, short of such a severe differential in status that the opinion of the observer has no effect, whatsoever, on the situation of the ordering party, there will always be some constraining of one’s actions by extrinsic reasoning—one can imagine dishes that would be reputationally destructive, and thus avoided. Similarly, there are many situations where, although a dish might slightly improve the impression one agent holds of another, the intrinsic cost to the ordering party (perhaps he is repulsed by the entree) will remove that dish from consideration. We might say that, in human social life, there are only three types of moves:

- Actions done for intrinsic reasons, but altered or constrained in expression by extrinsic considerations.

- Actions done for extrinsic reasons, but altered or constrained in expression by intrinsic considerations.

- Private actions.

(One might object that there are situations in which the impression of an observer is fully inconsequential, but that is almost never the case in human social life. Even the mightiest in our societies can be toppled by admitting to illegality, or by acting in a way that might lead to violence, etc.)

In other words, all actions undertaken in public (or in an ecology of mutual visibility) are motivated by a mixture of intrinsic and extrinsic motivations, and produce a combination of intrinsic and extrinsic effects, updating both the physical world and the epistemic states and beliefs of those around us. Teasing apart intrinsic and extrinsic motivations, considerations, and constraints is difficult even among those who know each other. An action is definitionally worth performing iff the intrinsic benefits outweigh any extrinsic costs (or vice-versa). Thus extrinsically costly actions can be a strong indicator to observers of the action holding intrinsic benefit for the actor, just as intrinsically costly actions can be a strong indicator of extrinsic benefit. (Hence exceptional dedications of energy or resources, or the undergoing of extremely unpleasant experiences, are strong signals of one’s loyalty to a group; see initiation and hazing ceremonies, and the absurd beliefs to which mandatory lip service is seen as strengthening, rather than weakening, cult affiliation.)

Notes

[1] Thomas Kuhn 1976: “What a man sees depends both upon what he looks at and also upon what his previous visual-conceptual experience has taught him to see. In the absence of such training there can only be, in William James’s phrase, a bloomin’ buzzin’ confusion.”

[2] This model is sometimes called “theory theory” in developmental psychology and cognitive science. Theory theory is sometimes opposed to “simulation theory” (the idea that we make decisions and understand the world by simulating it with our imagination), but the ideas are perfectly compatible. As Goffman writes, of G. H. Mead’s work:

[W]hen an individual considers taking a course of action, he is likely to hold off until he has imagined in his mind the consequence of his action for others involved, their likely response to this consequence, and the bearing of this response on his own designs. He then modifies his action so that it now incorporates that which he calculates will usefully modify the other’s generated response. In effect, he adapts to the other’s response before it has been called forth, and adapts in such a way that it never does have to be made.

[3] At the species level, organisms which consistently failed to predict the real workings of their world—at least, those parts of the world relevant to their survival—would die out. There is, necessarily, a close correlation between the monkey’s perception of the branches, as it swings through trees, and the branches’ actual spatial relationship—otherwise, the monkey would tumble to its death.

[4] “[A]ll else being equal, according to Klyubin, agents should maximise the number of possible future outcomes of their actions. “Keeping your options open” in this way means that when a task does arise, one is as empowered as possible to carry out whatever needs to be done to complete it. Klyubin et al. present the concept nicely in two aptly titled papers: “All Else Being Equal Be Empowered” and “Keep Your Options Open: An Information-Based Driving Principle for Sensorimotor Systems”. Since then, a lot of exciting work in robotics and reinforcement learning have used and extended the concept.” (Chris Marais, “Empowerment as Intrinsic Motivation”)

[5] Paul Graham

[6] There are also cues that are interpreted as signals, that is, intentionality-attributed cues.

[7] “The Meaning of Meaning,” §1.1
